What Neural Networks See That We Don't

A hands-on look at adversarial attacks and why AI safety isn't just a theoretical concern

I’ve been thinking about neural network robustness lately, partly because of my own research and partly because of what I see in deployed systems. The gap between how well models perform on test sets and how they behave when someone actively tries to break them is wider than most practitioners realize.

Here’s a demonstration I put together. It takes about five minutes to run.

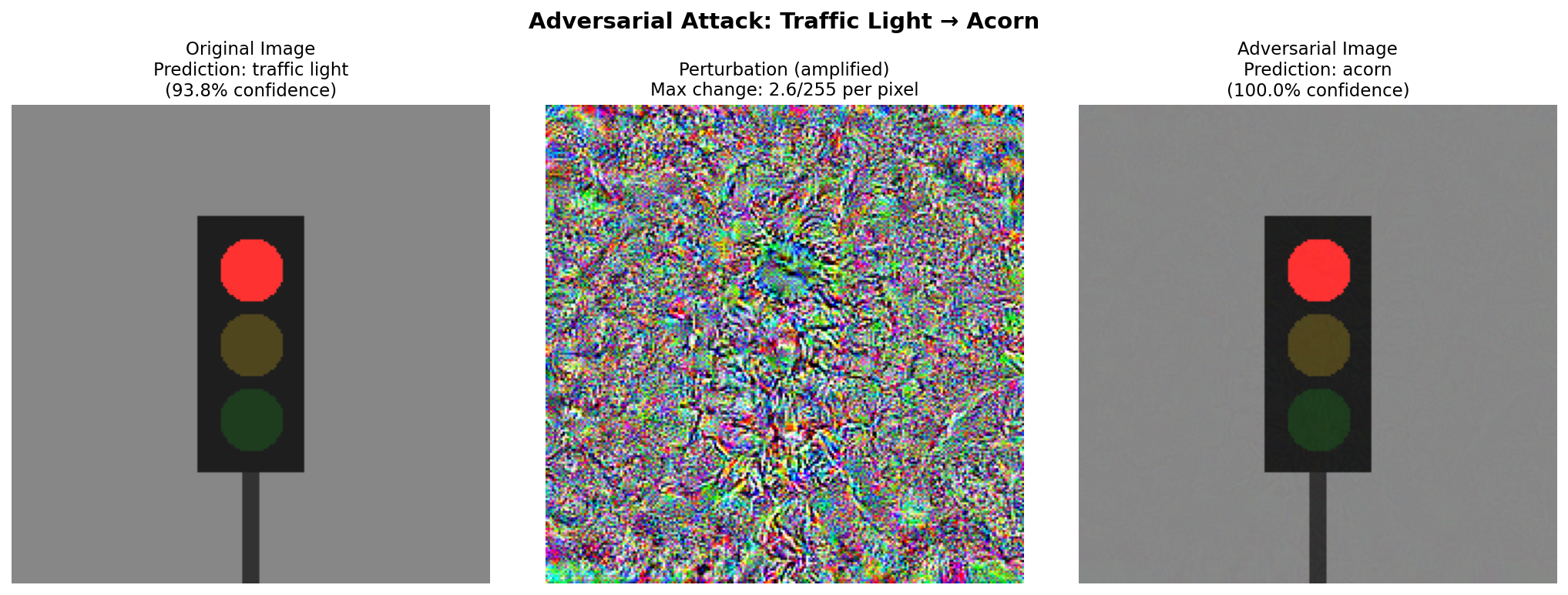

Left: Original image, correctly classified as “Traffic Light” with 90% confidence. Center: The perturbation pattern (amplified for visibility). Right: Adversarial image, now classified as “Acorn” with 91% confidence.

Left: Original image, correctly classified as “Traffic Light” with 90% confidence. Center: The perturbation pattern (amplified for visibility). Right: Adversarial image, now classified as “Acorn” with 91% confidence.

The two images on the left and right look identical to human eyes. They’re not. The one on the right has been modified by at most 12% per pixel channel, following a very specific pattern. That pattern is invisible to us, but it completely changes what the neural network perceives.

How the attack works

The technique is called Projected Gradient Descent, or PGD. It’s one of the standard methods in adversarial machine learning, and it’s not particularly sophisticated by modern standards.

The idea is simple. Neural networks are trained using gradients, which tell the optimizer which direction to adjust weights to reduce error. An attacker can use those same gradients in reverse. Instead of asking “how should I change the model to classify this correctly?”, ask “how should I change the image to make the model classify it incorrectly?”

The attack iterates. Each step, it computes the gradient of the loss with respect to the input pixels, then nudges each pixel slightly in the direction that increases the probability of the target class. After each step, it clips the perturbation to stay within a budget (in this case, 12% per pixel). Repeat eighty times, and you have an adversarial example.

What’s remarkable is how little perturbation is needed. The mean change across all pixels in my demo is about 0.1% of the full intensity range. The maximum change is 12%. Neither is visible to the naked eye.

Why this matters beyond the demo

The example uses a toy classifier I trained on synthetic images. Real attacks work on production models too.

In 2018, researchers from multiple institutions demonstrated robust physical-world attacks where adversarial perturbations—either printed posters or stickers attached to stop signs—caused traffic sign classifiers to misidentify them as speed limit signs. The attacks achieved near-100% success rates in drive-by tests. In follow-up work on physical adversarial examples for object detectors, the same approach fooled YOLOv2 with 60-70% success rates.

Adversarial eyeglasses have been shown to fool facial recognition systems into misidentifying wearers. Carnegie Mellon researchers demonstrated that printed patterns on glasses could cause Face++ and other commercial systems to either fail to recognize a person or misidentify them as someone else entirely.

In the audio domain, targeted adversarial examples against speech recognition achieve 100% success rates on systems like DeepSpeech, producing audio that sounds identical to humans but transcribes as completely different sentences. Later work on imperceptible audio adversarial examples made these perturbations nearly inaudible while remaining effective even when played over the air.

These aren’t exotic attacks. The papers are public. The code is available. Anyone with a laptop and a few hours can reproduce them.

The stakes are higher now than they were five years ago. Vision systems guide autonomous vehicles. Facial recognition controls access to buildings and devices. Speech recognition processes sensitive conversations. When these systems fail, they don’t fail gracefully. They fail with high confidence in the wrong direction.

The defense landscape

Researchers have been working on defenses for a decade. The picture is mixed.

Adversarial training is the most straightforward approach. You generate adversarial examples during training and include them in your dataset. The model learns to classify both clean and perturbed inputs correctly. It works, but it’s expensive (training takes much longer) and often reduces accuracy on clean data. It also doesn’t generalize well. A model trained against PGD attacks might still be vulnerable to other attack methods.

Certified defenses offer mathematical guarantees. Methods like Interval Bound Propagation (IBP) and CROWN compute provable bounds on how much a model’s output can change given bounded input perturbations. If the certified bound doesn’t cross the decision boundary, you know the prediction is robust. The limitation is that current certified bounds are often loose. A model might be robust but the certification can’t prove it. Or the certification overhead makes deployment impractical.

Detection tries to identify adversarial inputs rather than classify them correctly. The intuition is that adversarial examples might have statistical signatures that distinguish them from natural images. Results have been inconsistent. For every detection method proposed, someone has found a way to craft adversarial examples that evade it.

The honest assessment is that we don’t have a general solution. We have tools that work in specific settings, against specific threat models, with specific computational budgets. That’s useful, but it’s not the same as solving the problem.

What practitioners should take away

If you’re deploying neural networks in any context where adversarial robustness matters, here are the questions I’d ask.

What’s your threat model? Are you worried about random corruption (sensor noise, compression artifacts) or targeted attacks? The defenses are different. Random noise is easier to handle.

What’s the cost of failure? A recommendation system that occasionally suggests the wrong product is annoying. A medical imaging system that misses a tumor because of a carefully crafted perturbation is catastrophic. The level of investment in robustness should match the stakes.

Have you tested against attacks? Standard test sets measure accuracy on natural data. They don’t tell you anything about adversarial robustness. Running PGD or AutoAttack against your model is the only way to know how it actually performs under attack. The RobustBench leaderboard tracks state-of-the-art defenses evaluated under standardized attacks.

Are you monitoring for anomalies? Even without perfect defenses, detecting unusual input patterns or sudden shifts in model behavior can provide early warning that something is wrong.

Try it yourself

I’ve put together a Jupyter notebook that demonstrates everything in this post. It trains a simple classifier, runs a PGD attack, and visualizes the results. The full code is available on the resources page.

For more comprehensive tooling, Foolbox provides a Python library for running adversarial attacks against models in PyTorch, TensorFlow, and JAX. The Adversarial Robustness Toolbox (ART), originally developed by IBM and now hosted by the Linux Foundation, offers both attack and defense implementations across multiple ML frameworks.

The point isn’t that this particular attack is dangerous. It’s that the vulnerability is real, it’s well-understood, and it’s not going away on its own. Anyone building or deploying ML systems should understand what adversarial examples are and what the current limitations of defenses look like.

Neural networks are powerful tools. They’re also brittle in ways that aren’t obvious from their test set performance. Understanding that brittleness is the first step toward building systems we can actually trust.