vnvspec: Machine-Readable Specifications for AI Systems

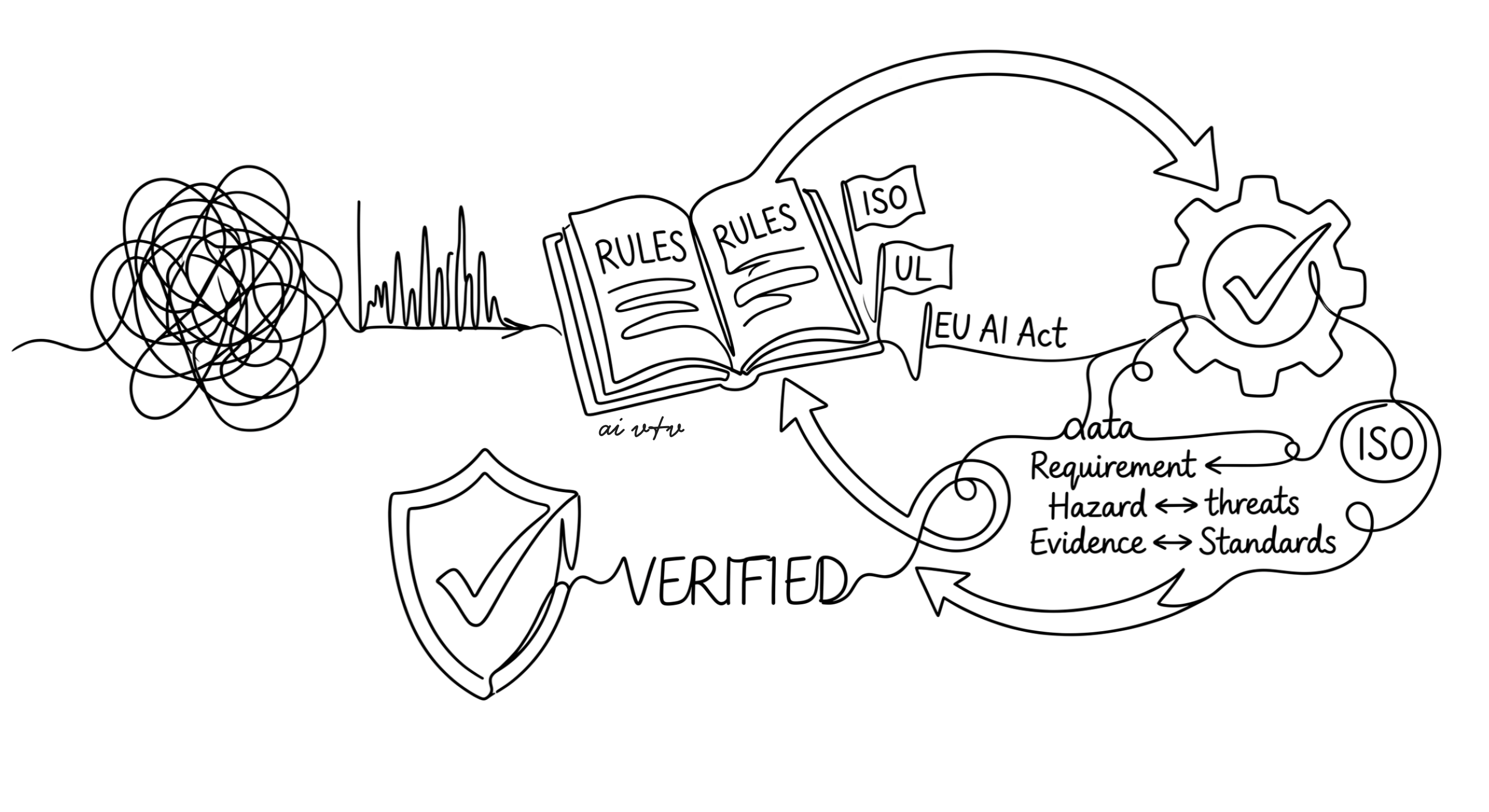

Most AI projects we encounter have something that looks like a specification. It might be a slide deck of acceptance metrics, a Confluence page of accuracy thresholds, or a paragraph in a project charter. What it usually is not is something a machine can parse, check, or trace through to evidence and back to a clause in a safety standard. That gap between “we wrote down what the model should do” and “we have auditable evidence that it does” is where most assurance work goes to die.

This week we are releasing vnvspec, an open-source Python library from our lab that closes that gap. vnvspec lets you express requirements, input-output contracts, operational design domains, hazards, and evidence as typed objects, then assess models against them and emit traceable reports that align with ISO/PAS 8800, ISO 21448 (SOTIF), UL 4600, the EU AI Act, and the NIST AI Risk Management Framework. The full documentation lives at ai-vnv.github.io/vnvspec.

Why a specification library

Writing requirements well is hard, and writing them so that automated tooling can use them is harder. Anyone who has tried to verify a deployed model against a vague statement like “the system should be reliable in adverse weather” knows the problem. The statement is not testable, the boundaries of “adverse weather” are not defined, and there is no link from that sentence to any operational evidence the team will eventually need to produce.

The systems engineering community solved a version of this problem decades ago. Standards like INCOSE’s Guide to Writing Requirements (GtWR) codify what a good requirement looks like, and safety-critical industries from aerospace to automotive have built whole assurance cultures around traceable, verifiable requirement structures. AI development largely skipped that step. The result is a deployment landscape where the distribution shift problem and the absence of structured assurance compound each other.

vnvspec brings the systems engineering discipline into the AI development loop without forcing teams to abandon the Python tooling they already use. A requirement is a Pydantic model. A specification is a container of requirements. Quality checks, traceability graphs, and standards mappings are methods on those models. The whole thing installs with pip, in the same workflow teams already use for PyTorch and Hugging Face libraries.

The core abstractions

The library is built around a small number of typed objects, each of which corresponds to something a V&V engineer would already recognize.

A Requirement is the atomic unit. It carries a shall-language statement, a rationale, a priority, a verification method drawn from the classical set of test, analysis, inspection, demonstration, or simulation, and a list of acceptance criteria. It also carries a standards field that maps the requirement to specific clauses in the standards registries vnvspec ships with.

```python
from vnvspec import Requirement, Spec

req = Requirement(
    id="REQ-001",
    statement="The classifier shall produce probabilities in [0, 1].",
    rationale="Probability outputs must be valid for downstream calibration.",
    verification_method="test",
    acceptance_criteria=["All output probabilities are between 0.0 and 1.0 inclusive."],
    standards={"iso_pas_8800": ["6.2.1"], "eu_ai_act": ["Annex IV.2(b)"]},
)

spec = Spec(name="image-classifier-v1", requirements=[req])
```

An IOContract describes the shape and invariants of a model’s inputs and outputs. An ODD (operational design domain) describes the conditions under which the model is expected to operate. A Hazard describes a way the system can fail or cause harm. An Evidence object records a verification activity: what was tested, how, and with what outcome. TraceLink and build_trace_graph() connect these objects into a graph that can be queried, visualized, and exported.
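To make the graph idea concrete, here is a minimal, dependency-free sketch of typed trace links and the queries an assessor would want first: which requirements have no evidence attached, and which hazards are therefore still open. It does not use vnvspec. The Link and TraceGraph classes, the relation names mitigated_by and verified_by, and the IDs HAZ-001 and EV-017 are hypothetical, and the real TraceLink and build_trace_graph() API may look quite different.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Link:
    """Hypothetical typed edge, e.g. hazard -> requirement or requirement -> evidence."""
    source: str
    target: str
    relation: str


@dataclass
class TraceGraph:
    # Adjacency list: source ID -> list of (relation, target ID).
    edges: dict = field(default_factory=dict)

    def add(self, link: Link) -> None:
        self.edges.setdefault(link.source, []).append((link.relation, link.target))

    def targets(self, source: str, relation: str) -> list:
        return [t for r, t in self.edges.get(source, []) if r == relation]


def build_graph(links) -> TraceGraph:
    graph = TraceGraph()
    for link in links:
        graph.add(link)
    return graph


def unverified(graph: TraceGraph, requirement_ids) -> list:
    """Requirements with no 'verified_by' edge to any evidence record."""
    return [rid for rid in requirement_ids if not graph.targets(rid, "verified_by")]


def open_hazards(graph: TraceGraph, hazard_ids) -> list:
    """Hazards with at least one mitigating requirement that still lacks evidence."""
    return [h for h in hazard_ids
            if unverified(graph, graph.targets(h, "mitigated_by"))]


graph = build_graph([
    Link("HAZ-001", "REQ-001", "mitigated_by"),
    Link("HAZ-001", "REQ-002", "mitigated_by"),
    Link("REQ-001", "EV-017", "verified_by"),
])
print(graph.targets("HAZ-001", "mitigated_by"))  # ['REQ-001', 'REQ-002']
print(unverified(graph, ["REQ-001", "REQ-002"]))  # ['REQ-002']
print(open_hazards(graph, ["HAZ-001"]))           # ['HAZ-001']
```

Keeping the edges typed is what lets a single graph answer both engineering questions (what is untested?) and safety questions (which hazards are not yet argued closed?).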

The design is deliberately conservative. Each object is something a safety engineer working under ISO/PAS 8800 or UL 4600 would expect to find. The novelty is not in the conceptual model but in making it ergonomic, typed, and Python-native, so that ML practitioners can author and check it without leaving their normal development environment.

Catching bad requirements before they cost you

One of the most common failure modes in AI assurance is the requirement that looks fine on a page but cannot actually be tested. vnvspec’s Requirement.check_quality() method runs a battery of INCOSE GtWR rule checks and returns a list of violations.

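```python
from vnvspec import Requirement

# A deliberately weak requirement: no "shall", a vague verb,
# no rationale, and no acceptance criteria.
req = Requirement(id="REQ-001", statement="The system should work.")

violations = req.check_quality()
for v in violations:
    print(f"{v.rule}: {v.message}")
```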

This catches the recurring offenders: missing shall-language, non-binding modals like “should” or “may”, absent rationale, missing acceptance criteria, ambiguous quantifiers, and so on. Running this check on the requirement set early in a project surfaces the unverifiable statements before they propagate into test plans, dashboards, and stakeholder briefings.
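For readers curious what rules of this kind look like mechanically, the following sketch implements a handful of GtWR-style heuristics with regular expressions. The rule IDs, word lists, and this check_quality function are hypothetical and much cruder than a real rule battery; vnvspec’s own rules and messages will differ.

```python
import re
from dataclasses import dataclass

# Hypothetical rule IDs and word lists, chosen for illustration only.
VAGUE_TERMS = ("should", "may", "reliable", "robust", "adequate", "as appropriate")
AMBIGUOUS_QUANTIFIERS = ("some", "several", "many", "etc")


@dataclass
class Violation:
    rule: str
    message: str


def _contains(text: str, phrase: str) -> bool:
    # Whole-word, case-insensitive match, so "mayor" does not trigger "may".
    return re.search(rf"\b{re.escape(phrase)}\b", text, re.IGNORECASE) is not None


def check_quality(statement: str, rationale: str = "", acceptance_criteria=()) -> list:
    """Return GtWR-style violations for one requirement (toy heuristics only)."""
    found = []
    if not _contains(statement, "shall"):
        found.append(Violation("R-SHALL", "No 'shall'; the statement is not binding."))
    vague = [t for t in VAGUE_TERMS if _contains(statement, t)]
    if vague:
        found.append(Violation("R-VAGUE", "Vague or unverifiable terms: " + ", ".join(vague)))
    quantifiers = [q for q in AMBIGUOUS_QUANTIFIERS if _contains(statement, q)]
    if quantifiers:
        found.append(Violation("R-QUANT", "Ambiguous quantifiers: " + ", ".join(quantifiers)))
    if not rationale.strip():
        found.append(Violation("R-RATIONALE", "No rationale given."))
    if not acceptance_criteria:
        found.append(Violation("R-ACCEPT", "No acceptance criteria given."))
    return found


for v in check_quality("The system should work."):
    print(f"{v.rule}: {v.message}")
```

On the weak requirement above, this sketch reports R-SHALL, R-VAGUE, R-RATIONALE, and R-ACCEPT, while the REQ-001 statement from the previous section passes once a rationale and acceptance criteria are supplied.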

We have found this kind of automated rigor especially valuable when working with teams that are new to formal V&V. The library does not lecture; it simply returns a structured list of issues, and the team learns the patterns of good requirement writing through their own iteration.

Adapters and auto-generated tests

A specification that lives in a vacuum is a documentation artifact. A specification that talks to your model is an assurance asset. vnvspec supports this through a ModelAdapter protocol with concrete implementations for PyTorch and Hugging Face, plus an extensible interface for wrapping scikit-learn, ONNX, Pyomo optimization models, and FMI simulation models.
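The value of an adapter protocol is that requirement checks can be written once against a narrow interface. A minimal sketch using Python’s typing.Protocol follows; PredictAdapter, its single predict method, and the two toy models are assumptions for illustration, not vnvspec’s ModelAdapter definition.

```python
from typing import List, Protocol, Sequence, runtime_checkable


@runtime_checkable
class PredictAdapter(Protocol):
    """Hypothetical adapter interface: feature vectors in, class probabilities out."""

    def predict(self, batch: Sequence[Sequence[float]]) -> List[List[float]]:
        ...


class ThresholdModel:
    """Toy two-class model with no framework dependency."""

    def __init__(self, threshold: float) -> None:
        self.threshold = threshold

    def predict(self, batch):
        probs = []
        for x in batch:
            p = min(1.0, max(0.0, sum(x) / (2 * self.threshold)))
            probs.append([1.0 - p, p])
        return probs


class LogitModel:
    """Toy model that (wrongly) returns raw scores instead of probabilities."""

    def predict(self, batch):
        return [[sum(x), -sum(x)] for x in batch]


def outputs_are_probabilities(adapter: PredictAdapter, batch) -> bool:
    # Written once against the adapter interface, whatever framework sits underneath.
    return all(0.0 <= p <= 1.0 for row in adapter.predict(batch) for p in row)


batch = [[0.2, 0.3], [5.0, 5.0]]
print(isinstance(ThresholdModel(1.0), PredictAdapter))        # True
print(outputs_are_probabilities(ThresholdModel(1.0), batch))  # True
print(outputs_are_probabilities(LogitModel(), batch))         # False
```

Because the check depends only on predict, the same function would apply unchanged to a PyTorch or ONNX wrapper exposing that method. Note that runtime_checkable only verifies that the method exists, not its signature or return type.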

Once a model is wrapped in an adapter, vnvspec can auto-generate pytest cases and Hypothesis property tests directly from the requirements and IO contracts. The generated tests check the kinds of properties that matter for assurance: output domain validity, monotonicity where required, robustness within an ODD, and behavior at hazard-relevant edge cases. The same specification then drives a GitHub Actions integration, so requirement violations show up in CI alongside ordinary unit-test failures.
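Property tests of this kind can be sketched without any test framework. The example below samples inputs from an ODD expressed as a box of per-feature ranges and checks two properties: outputs stay in [0, 1], and the risk score never decreases as speed increases. It uses Python’s random module as a dependency-free stand-in for Hypothesis, which adds input shrinking and smarter search; the ODD ranges, feature names, and toy_model are hypothetical.

```python
import random

# Hypothetical ODD: an axis-aligned box of per-feature operating ranges.
ODD = {"visibility_m": (50.0, 1000.0), "speed_kph": (0.0, 120.0)}


def toy_model(visibility_m: float, speed_kph: float) -> float:
    """Toy risk score clipped to [0, 1]; rises with speed, falls with visibility."""
    score = 0.3 + 0.005 * speed_kph - 0.0005 * visibility_m
    return min(1.0, max(0.0, score))


def sample_odd(rng: random.Random) -> dict:
    return {name: rng.uniform(lo, hi) for name, (lo, hi) in ODD.items()}


def check_output_domain(n: int = 500, seed: int = 0) -> bool:
    """Property: every output for an in-ODD input lies in [0, 1]."""
    rng = random.Random(seed)
    return all(0.0 <= toy_model(**sample_odd(rng)) <= 1.0 for _ in range(n))


def check_monotone_in(feature: str, n: int = 500, seed: int = 1) -> bool:
    """Property: output never decreases when `feature` increases, others held fixed."""
    rng = random.Random(seed)
    lo, hi = ODD[feature]
    for _ in range(n):
        base = sample_odd(rng)
        a, b = sorted((rng.uniform(lo, hi), rng.uniform(lo, hi)))
        if toy_model(**{**base, feature: a}) > toy_model(**{**base, feature: b}):
            return False  # counterexample found
    return True


print(check_output_domain())              # True
print(check_monotone_in("speed_kph"))     # True
print(check_monotone_in("visibility_m"))  # False
```

The monotonicity check fails for visibility, where the toy score is designed to decrease, which is exactly the kind of counterexample a generated test would surface in CI.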

This is where the library starts to pay back the investment of writing things down properly. The specification is no longer a parallel artifact maintained by hand. It becomes the source from which tests, reports, and assurance documents are generated.

Standards mapping and exports

The standards registries are the part of vnvspec that connects most directly to the regulatory and certification work happening across our region and beyond. Each registry contains a clause database for one of the major standards in our scope, and any requirement can reference one or more clauses across one or more standards.
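A clause registry can be as simple as a lookup table, and the first useful check is that every clause a requirement cites actually exists in it. The sketch below borrows its registry keys and clause IDs from the REQ-001 example earlier; the REGISTRY contents are placeholders rather than a faithful copy of either standard, and check_standards and citations_by_standard are hypothetical helpers.

```python
# Placeholder registry: standard key -> set of known clause IDs. The keys and
# the two clause IDs echo the REQ-001 example; a real registry would hold many
# more clauses, with titles, and this is not a reference for either standard.
REGISTRY = {
    "iso_pas_8800": {"6.2.1"},
    "eu_ai_act": {"Annex IV.2(b)"},
}


def check_standards(req_id: str, standards: dict) -> list:
    """Return one message per unknown standard or unknown clause reference."""
    problems = []
    for std, clauses in standards.items():
        if std not in REGISTRY:
            problems.append(f"{req_id}: unknown standard '{std}'")
            continue
        for clause in clauses:
            if clause not in REGISTRY[std]:
                problems.append(f"{req_id}: '{clause}' not in {std} registry")
    return problems


def citations_by_standard(requirements: dict) -> dict:
    """Map each registered standard to the sorted IDs of requirements citing it."""
    cited = {std: [] for std in REGISTRY}
    for req_id, standards in requirements.items():
        for std in standards:
            if std in cited:
                cited[std].append(req_id)
    return {std: sorted(ids) for std, ids in cited.items()}


reqs = {
    "REQ-001": {"iso_pas_8800": ["6.2.1"], "eu_ai_act": ["Annex IV.2(b)"]},
    # Deliberate mistakes: an EU AI Act clause filed under the wrong standard,
    # and a standard (ISO 26262) that has no registry yet.
    "REQ-002": {"iso_pas_8800": ["Annex IV.2(b)"], "iso_26262": ["Part 6"]},
}
for rid, stds in reqs.items():
    for problem in check_standards(rid, stds):
        print(problem)
print(citations_by_standard(reqs))
```

The second requirement cites ISO 26262, a standard without a registry in this sketch, so it is flagged as unknown rather than silently accepted.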

| Standard | Domain | Why it matters |
|---|---|---|
| ISO/PAS 8800 | Safety and AI for road vehicles | The emerging baseline for AI in automotive safety |
| ISO 21448 (SOTIF) | Safety of the intended functionality | Covers performance limitations, not just system faults |
| UL 4600 | Evaluation of autonomous products | Outcome-based safety case structure |
| EU AI Act | High-risk AI systems in the EU | Legally binding from 2026 onward |
| NIST AI RMF | Voluntary AI risk management | Reference framework cited widely in procurement |

When the assessment runs, vnvspec emits HTML reports, Markdown summaries, Goal Structuring Notation (GSN) assurance cases rendered as Mermaid diagrams, and EU AI Act Annex IV technical documentation skeletons. The same evidence can therefore answer a regulator, a safety case reviewer, and an internal engineering audit without redundant work.
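Rendering a GSN argument as Mermaid is mostly a matter of mapping node kinds to shapes. Here is a minimal sketch assuming three GSN element kinds (goal, strategy, solution) drawn as a rectangle, a parallelogram, and a circle. The Node class, to_mermaid, and the example argument are hypothetical, labels are not escaped (a double quote inside a label would break the output), and vnvspec’s actual exporter may choose different shapes and layout.

```python
from dataclasses import dataclass, field

# Hypothetical mapping of GSN element kinds to Mermaid flowchart shapes.
SHAPES = {
    "goal": ('["', '"]'),        # rectangle
    "strategy": ('[/"', '"/]'),  # parallelogram
    "solution": ('(("', '"))'),  # circle
}


@dataclass
class Node:
    node_id: str
    kind: str  # "goal", "strategy", or "solution"
    text: str
    children: list = field(default_factory=list)


def to_mermaid(root: Node) -> str:
    """Emit node declarations first, then edges, as a top-down flowchart."""
    nodes, edges = [], []

    def visit(node: Node) -> None:
        start, end = SHAPES[node.kind]
        nodes.append(f"    {node.node_id}{start}{node.text}{end}")
        for child in node.children:
            edges.append(f"    {node.node_id} --> {child.node_id}")
            visit(child)

    visit(root)
    return "\n".join(["flowchart TD", *nodes, *edges])


case = Node("G1", "goal", "Classifier outputs are valid probabilities", [
    Node("S1", "strategy", "Argue over each output requirement", [
        Node("Sn1", "solution", "EV-017: test report for REQ-001"),
    ]),
])
print(to_mermaid(case))
```

The resulting text can be pasted into any Markdown renderer that supports Mermaid, which is what makes it a convenient format for reviewers who will never open a Python environment.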

Where this fits in our research program

vnvspec sits inside a broader research agenda we have been building at the AI V&V Lab. Our certified robustness work on supply chain DNNs, our verification experiments on neural network reachability, and our editorial work at OSCM all point in the same direction. AI systems destined for high-stakes deployment need the same kind of structured, traceable, standards-aligned assurance that aerospace and automotive systems have had for decades, and the tooling for that assurance has to be ergonomic enough that ML teams will actually use it.

The library is also designed with Vision 2030 in mind. Saudi Arabia’s investments through SDAIA and adjacent agencies are pushing AI into transport, logistics, and critical infrastructure on a timeline that does not allow for ad-hoc assurance practices. A machine-readable specification layer that maps to international standards is, in our view, a piece of the public-good infrastructure that this transition needs. Open-sourcing it under Apache 2.0 reflects that intent.

Looking for collaborators

vnvspec ships with a deliberately scoped first release, and we are actively looking for collaborators to extend it in two directions where we know the demand exists but our lab cannot cover the ground alone.

The first direction is integration with neural network verifiers. The current adapter layer wraps models for assessment against requirements, but it does not yet plug into the formal verification toolchain. We want vnvspec specifications to drive α,β-CROWN, auto_LiRPA, Marabou, ERAN, NNV, and similar tools, so that a robustness or reachability requirement can be discharged by a verifier and the resulting certificate flows back into the evidence graph automatically. We have started this work for our own certified newsvendor experiments, and we would welcome contributors who maintain or use these verifiers in their own research.
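To show the shape of that loop, the sketch below uses plain interval bound propagation (IBP), one of the simplest sound bounding techniques, on a two-layer ReLU network, then packages the outcome as an evidence record. Tools like auto_LiRPA and α,β-CROWN compute far tighter bounds; this toy is a stand-in for them, not an interface to them. The network weights, the REQ-ROB-01 identifier, and the evidence dictionary fields are hypothetical.

```python
# Hypothetical 2-2-2 ReLU network: list of (weights, biases) per affine layer.
LAYERS = [
    ([[1.0, -1.0], [0.5, 0.5]], [0.0, 0.0]),
    ([[2.0, 0.0], [0.0, 1.0]], [0.0, 0.0]),
]


def affine_bounds(lo, hi, weights, biases):
    """Interval image of x -> Wx + b when each x_i lies in [lo_i, hi_i]."""
    out_lo, out_hi = [], []
    for row, bias in zip(weights, biases):
        low = high = bias
        for w, xl, xu in zip(row, lo, hi):
            # A negative weight swaps which end of the input interval is worst.
            low += w * (xl if w >= 0 else xu)
            high += w * (xu if w >= 0 else xl)
        out_lo.append(low)
        out_hi.append(high)
    return out_lo, out_hi


def certify_margin(x0, eps, layers, target, other):
    """Sound check that logit[target] > logit[other] for all x with |x - x0|_inf <= eps."""
    lo = [v - eps for v in x0]
    hi = [v + eps for v in x0]
    for i, (weights, biases) in enumerate(layers):
        lo, hi = affine_bounds(lo, hi, weights, biases)
        if i < len(layers) - 1:  # ReLU after every layer except the last
            lo = [max(0.0, v) for v in lo]
            hi = [max(0.0, v) for v in hi]
    margin = lo[target] - hi[other]  # worst case over the whole input box
    return margin > 0, margin


def to_evidence(req_id, eps, certified, margin):
    """Package the verifier outcome as a hypothetical evidence record."""
    return {
        "requirement": req_id,
        "method": "analysis",
        "technique": "interval bound propagation",
        "result": "pass" if certified else "inconclusive",
        "details": f"eps={eps}, worst-case margin={margin:.3f}",
    }


for eps in (0.1, 0.6):
    ok, margin = certify_margin([1.0, 0.0], eps, LAYERS, target=0, other=1)
    print(to_evidence("REQ-ROB-01", eps, ok, margin))
```

Because IBP is sound but incomplete, a negative margin means “not proven”, not “violated”, which is why the sketch records that outcome as inconclusive rather than as a failure.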

The second direction is standards coverage. The five registries that ship today address AI-specific and autonomy-adjacent standards, and there are several related regimes that vnvspec does not yet cover. The notable gaps include ISO 26262 for automotive functional safety, IEC 61508 for general functional safety, DO-178C and DO-254 for avionics software and hardware, DO-326A for airworthiness security, IEC 62304 and ISO 13485 for medical software and devices, the FDA’s predetermined change control plan guidance for AI/ML-enabled SaMD, ISO/IEC 42001 for AI management systems, ISO/IEC 23894 for AI risk management guidance, and the IEEE 7000 series on ethically aligned design. Each of these has its own clause structure, terminology, and assurance vocabulary, and getting the registry right requires domain expertise we do not pretend to have across all of them.

A related effort we want to start is a public catalog of requirements published and shared as best practices across engineering subfields. Power systems, oil and gas, water treatment, rail, maritime, and process industries each have decades of accumulated requirement patterns, often locked inside proprietary documents or organizational tribal knowledge. A shared, versioned catalog of high-quality requirement templates, with proper attribution and licensing, would lower the barrier to authoring good specifications in domains where AI is now being introduced. We are interested in working with practitioners in those subfields who want to contribute templates, with academic groups building benchmark requirement sets, and with standards bodies and professional societies whose members already carry this knowledge informally. The catalog interface in vnvspec is the technical hook for that work, and we expect it to evolve substantially as contributions come in.
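A catalog entry could be little more than a parameterized statement plus provenance. The sketch below uses string.Template from the standard library; the CATALOG structure, the output-range template, its field names, the license value, and the power-systems example are illustrative assumptions, not the vnvspec catalog interface.

```python
from string import Template

# Hypothetical catalog: template ID -> parameterized statement plus provenance.
CATALOG = {
    "output-range": {
        "statement": Template("The $component shall keep $quantity within [$low, $high] $unit."),
        "verification_method": "test",
        "source": "illustrative example, not drawn from any standard",
        "license": "CC-BY-4.0 (illustrative)",
    },
}


def instantiate(template_id: str, req_id: str, **params: str) -> dict:
    """Fill a catalog template and keep a link back to where it came from."""
    entry = CATALOG[template_id]
    # substitute() raises KeyError if a placeholder is left unfilled, so an
    # incomplete instantiation fails loudly instead of leaving "$low" in the text.
    statement = entry["statement"].substitute(**params)
    return {
        "id": req_id,
        "statement": statement,
        "verification_method": entry["verification_method"],
        "derived_from": template_id,
    }


req = instantiate("output-range", "REQ-PS-004",
                  component="load forecaster", quantity="predicted feeder load",
                  low="0", high="12", unit="MW")
print(req["statement"])
# The load forecaster shall keep predicted feeder load within [0, 12] MW.
```

Recording derived_from on every instantiated requirement is what would let a shared catalog carry attribution forward into each project that uses it.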

If any of this overlaps with what you are working on, the GitHub issues page is the right place to start a conversation, and our lab’s collaboration channels are listed at ai-vnv.kfupm.io.

Trying it out

Install the core library with “pip install vnvspec”; optional extras add the PyTorch adapter and the documentation tooling. The repository contains worked examples and notebooks, the concepts documentation walks through the full type system, and the standards section provides clause-by-clause reference material for each registry.

The deeper goal here is to turn V&V from a downstream gate into an upstream design discipline. Every requirement that is machine-readable, every link to a standards clause that is explicit, and every piece of evidence that is automatically generated rather than manually compiled is a small piece of confidence that AI systems deployed in the real world will behave the way we said they would.